Robotic-handover-tasks-The-semantic-objects-information-is-provided-by-VLM-and-LLM

Robotic-handover-tasks-The-semantic-objects-information-is-provided-by-VLM-and-LLMAbstract

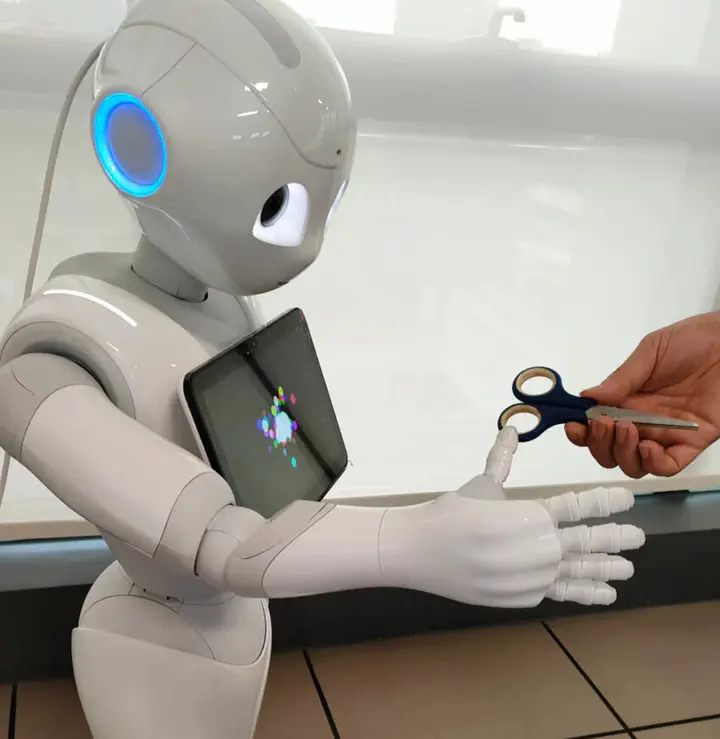

We are utilizing a combination of Large Language Model (LLM) and Vision Language Model (VLM) to perform a robot-to-human handover task with semantic object knowledge. Current object perception systems for this task often work with a fixed set of objects and primarily consider geometric properties, neglecting semantic knowledge about where or where not to grasp an object. By applying LLM and VLM in a zero-shot fashion, we demonstrate that our approach can identify optimal and semantically correct handover parts for both the robot and the human in this handover task. We validate our approach quantitatively across several object categories.

Add the publication’s full text or supplementary notes here. You can use rich formatting such as including code, math, and images.